Augmented reality (AR) is a technology that superimposes digital content (images, audio, and text) on a real-world environment. AR received much attention in 2016, when the game Pokémon Go made it possible to interact with Pokémon superimposed on the world via a smartphone screen.

Augmented reality has grown in popularity since then. In 2017, Apple released its ARKit platform, and Google revealed web API prototypes later that year. Then there are Apple’s anticipated AR glasses, which will allow users to have AR experiences without glancing down at their phones.

In other words, augmented reality is on the verge of becoming mainstream. If you’re still unsure what it is, you’ve come to the right place. In this article, we’ll look at what augmented reality is and how it can be used in the real world.

What is Augmented Reality (AR)?

The term “augmented reality” refers to reality that has been supplemented with interactive digital components. Users can turn on a smartphone’s camera, view the actual world around them on the screen, and rely on an AR application to enhance that view with digital overlays in a variety of ways:

- Changing colors.

- Placing labels.

- Applying “filters” on Instagram, Snapchat, and other applications to change a person’s look or surroundings.

- Superimposing images, digital information, and 3D models.

- Displaying real-time instructions.

AR can be seen on various screens, glasses, mobile devices, and head-mounted displays.

It’s crucial to know what AR isn’t before you can understand it.

Unlike virtual reality (VR), AR does not provide a fully immersive experience. While virtual reality requires users to put on a special headset and immerse themselves in a purely digital environment, AR allows them to keep interacting with the actual world.

Types Of AR (Augmented Reality)

Marker-less AR, marker-based AR, projection-based AR, and superimposition-based AR are the four types of augmented reality. Let’s take a look at each one individually.

#1. Marker-based AR

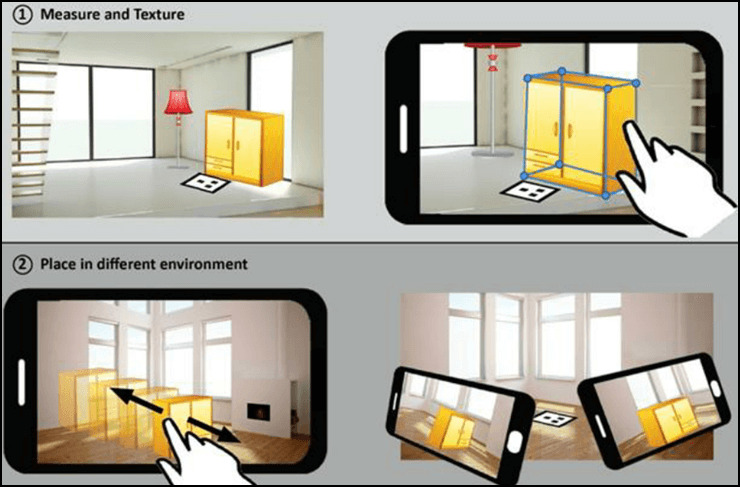

Marker-based AR needs a camera and a marker, which is a unique visual item such as a distinctive sign. When the camera sees the marker, the system calculates the marker’s position and orientation so that it can place the 3D digital content accurately.

An example of marker-based AR is a mobile-based AR furnishing app.

#2. Marker-less AR

Marker-less AR is used in event, business, and navigation apps, where location-based information determines what content the user receives or discovers in a particular region. It can rely on GPS, compasses, gyroscopes, and accelerometers, like those found in mobile phones.

The following example demonstrates how marker-less AR can position things in real-world space without the need for physical markers:

#3. Projection-based AR

This type projects artificial light onto physical surfaces and detects the user’s contact with them. The holograms seen in Star Wars and other science fiction movies illustrate the idea.

An example of a sword projection in a projection-based AR headset is shown below:

#4. Superimposition-based AR

The original object is entirely or partially replaced with an augmentation. The IKEA Catalog app, for example, allows users to place a virtual furniture item, shown to scale, over an image of a room.

The IKEA app is an example of superimposition-based AR:

How Does AR Work?

AR works in three steps. The first step is to capture pictures of real-world environments. The second step employs technology that superimposes 3D graphics over those pictures of real-world things. The third step uses technology that lets users interact with the simulated environments.

Screens, eyewear, portable devices, mobile phones, and head-mounted displays can all be used to show AR.

Mobile AR, head-mounted gear AR, smart glasses AR, and web-based AR are examples of this. Headsets provide a more immersive experience than smartphones and other sorts of devices. Smart glasses are wearable augmented reality devices that enable first-person views without downloading an app.

AR glasses configurations:

In addition to other technologies, AR uses SLAM (Simultaneous Localization And Mapping) and depth tracking, which calculates the distance to an object using sensor data.

Augmented Reality Technology

AR technology makes real-time augmentation possible, and the augmentation takes place in the context of the environment. Using animations, pictures, videos, and 3D models, users can examine objects in natural and artificial light.

Visual-based SLAM:

Simultaneous Localization and Mapping (SLAM) is a set of algorithms for solving the problem its name describes: building a map of an unknown environment while tracking the device’s position within that map.

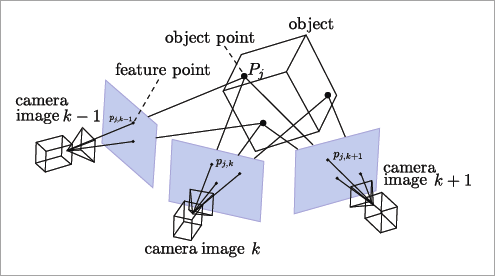

SLAM uses feature points to help apps understand the physical world. Thanks to this technology, apps can recognize 3D objects and scenes and track the physical world in real time, which in turn lets digital simulations be superimposed on it.

SLAM lets a mobile robot sense its surroundings, construct a virtual map, and track its own location, direction, and route. For example, it is used on drones, aerial vehicles, uncrewed vehicles, and robot cleaners, where it draws on artificial intelligence and machine learning to understand locations.

Cameras and sensors capture feature points from diverse angles for feature detection and matching. The object’s three-dimensional position is then inferred using the triangulation technique.
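The triangulation step can be illustrated with a small sketch. It assumes two cameras whose positions are already known and that each has matched the same feature point, so each camera contributes a ray pointing toward the feature. The function name `triangulate_midpoint` and the sample coordinates are hypothetical, and the midpoint method used here is only one simple way to triangulate; production SLAM systems typically use more robust estimators.

```python
def dot(u, v):
    """Dot product of two 3D vectors given as tuples."""
    return sum(a * b for a, b in zip(u, v))


def triangulate_midpoint(c1, d1, c2, d2, eps=1e-12):
    """Estimate a 3D point from two viewing rays.

    c1, c2: camera centers; d1, d2: ray directions toward the feature.
    Returns the midpoint of the shortest segment joining the two rays.
    """
    w = tuple(p - q for p, q in zip(c1, c2))
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < eps:
        # Parallel rays never converge, so depth is undefined.
        raise ValueError("rays are parallel; depth cannot be triangulated")
    s = (b * e - c * d) / denom  # parameter along the first ray
    t = (a * e - b * d) / denom  # parameter along the second ray
    p1 = tuple(p + s * q for p, q in zip(c1, d1))
    p2 = tuple(p + t * q for p, q in zip(c2, d2))
    return tuple((x + y) / 2 for x, y in zip(p1, p2))


if __name__ == "__main__":
    # Two cameras one unit apart, both looking at the same feature.
    point = triangulate_midpoint(
        (0.0, 0.0, 0.0), (0.5, 0.0, 2.0),
        (1.0, 0.0, 0.0), (-0.5, 0.0, 2.0),
    )
    print(point)
```

With noisy measurements the two rays rarely intersect exactly, which is why the sketch returns the midpoint between them rather than an intersection.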

In augmented reality, SLAM helps place a virtual object into a real scene and blend it in.

Recognition-based AR:

In this approach, a camera looks for markers, and an overlay is applied when one is detected. The device calculates the position and orientation of the marker, then replaces it with a 3D model. The position and orientation of the overlaid content are then derived from the marker’s, so the entire object rotates when the marker is rotated.
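A simplified 2D sketch can show why the overlay follows the marker. It assumes a detector has already returned the marker’s four corner pixels in the order top-left, top-right, bottom-right, bottom-left; the function names `marker_pose` and `place_on_marker` are hypothetical. Real systems estimate a full 3D pose, so this only illustrates the idea in the image plane.

```python
import math


def marker_pose(corners):
    """Return (center, angle in degrees) of a marker from its four corners.

    Assumes corners are ordered top-left, top-right, bottom-right, bottom-left.
    """
    cx = sum(x for x, _ in corners) / 4
    cy = sum(y for _, y in corners) / 4
    (tlx, tly), (trx, try_) = corners[0], corners[1]
    # Orientation is taken from the direction of the marker's top edge.
    angle = math.degrees(math.atan2(try_ - tly, trx - tlx))
    return (cx, cy), angle


def place_on_marker(model_points, center, angle_deg, scale=1.0):
    """Rotate, scale, and translate model points so they follow the marker."""
    theta = math.radians(angle_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    cx, cy = center
    placed = []
    for x, y in model_points:
        rx = scale * (x * cos_t - y * sin_t)
        ry = scale * (x * sin_t + y * cos_t)
        placed.append((cx + rx, cy + ry))
    return placed


if __name__ == "__main__":
    # The same square marker, seen after a quarter turn.
    rotated = [(2, 0), (2, 2), (0, 2), (0, 0)]
    center, angle = marker_pose(rotated)
    print(center, angle)
    print(place_on_marker([(1, 0)], center, angle))
```

Because every model point is transformed with the marker’s angle and center, turning the marker turns the whole overlay with it.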

Location-based Approach:

Data from GPS, digital compasses, accelerometers, and velocity meters are used to create simulations or visualizations. It is widely used in cell phones.
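As a rough sketch of how this sensor data can drive content selection, the code below combines a GPS position with a compass heading to decide whether a point of interest should be drawn. The function names, the 500 m range, and the 60-degree field of view are assumptions chosen for illustration, not values from any particular AR framework.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def distance_and_bearing(lat1, lon1, lat2, lon2):
    """Return (distance in metres, initial bearing in degrees) from point 1 to point 2."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    # Haversine formula for great-circle distance.
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    distance = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))
    # Initial bearing, measured clockwise from north.
    y = math.sin(dlmb) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    bearing = (math.degrees(math.atan2(y, x)) + 360) % 360
    return distance, bearing


def is_in_view(user_lat, user_lon, heading_deg, poi_lat, poi_lon,
               max_distance_m=500.0, fov_deg=60.0):
    """True if the point of interest is close enough and inside the camera's field of view."""
    distance, bearing = distance_and_bearing(user_lat, user_lon, poi_lat, poi_lon)
    # Signed angle between where the phone points and where the POI lies.
    offset = (bearing - heading_deg + 180) % 360 - 180
    return distance <= max_distance_m and abs(offset) <= fov_deg / 2


if __name__ == "__main__":
    # A point of interest slightly north of the user.
    print(is_in_view(0.0, 0.0, 0.0, 0.001, 0.0))    # phone facing north
    print(is_in_view(0.0, 0.0, 180.0, 0.001, 0.0))  # phone facing south
```

An app built this way would run the check for each nearby point of interest whenever the position or heading changes, then draw labels only for those that pass.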

Depth tracking technology:

Depth-tracking cameras, such as the Microsoft Kinect, create a real-time depth map by measuring the distance from the camera to the objects in the monitored area, using various methods. The technology can then isolate an object from the larger depth map and analyze it.
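One simple way to isolate an object from a depth map is to keep only the pixels whose distance falls inside a chosen range. The sketch below assumes the depth map is a plain grid of distances in metres; the function names and sample values are hypothetical, and real depth cameras expose their data through their own SDKs.

```python
def isolate_object(depth_map, near_m, far_m):
    """Return a boolean mask marking pixels whose depth lies in [near_m, far_m]."""
    return [[near_m <= d <= far_m for d in row] for row in depth_map]


def bounding_box(mask):
    """Return (top, left, bottom, right) of the masked region, or None if it is empty."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for row in mask for c, hit in enumerate(row) if hit]
    if not rows:
        return None
    return (min(rows), min(cols), max(rows), max(cols))


if __name__ == "__main__":
    # A wall about 3 m away with a small object roughly 1.2 m from the camera.
    depth_map = [
        [3.0, 3.0, 3.0, 3.0],
        [3.0, 1.2, 1.1, 3.0],
        [3.0, 1.3, 1.2, 3.0],
        [3.0, 3.0, 3.0, 3.0],
    ]
    mask = isolate_object(depth_map, near_m=1.0, far_m=1.5)
    print(bounding_box(mask))
```

Thresholding on depth is much simpler than separating objects by color, which is one reason depth cameras are useful for this kind of tracking.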

Natural Feature Tracking Technology:

It might be used to track rigid objects in a maintenance or assembly task. A multistage tracking technique is used to predict an object’s motion more accurately. In addition to calibration approaches, marker tracking is utilized as an option.

Virtual 3D objects and animations are overlaid on real-world items using the geometric relationship between them. Extended face tracking is now available on devices with TrueDepth cameras, like the iPhone XR, allowing for enhanced AR experiences.

The Future of AR

You might also be curious about the technology’s prospects. The fact is that, as technology develops and a greater number of businesses adopt it, augmented reality has a bright future ahead of it.

According to Statista, the global market for augmented reality, virtual reality, and mixed reality (MR) was predicted to reach $30.7 billion in 2021 and to exceed $300 billion in 2024.

AR and the Metaverse are two of the most significant advancements in the near future.

The Metaverse

The metaverse is a significant advance that Meta (Facebook’s new name after its rebranding) is expected to reveal shortly. It’s thought to be the next step in the internet’s evolution, bringing the physical and virtual worlds closer together.

People’s online avatars will be able to communicate with each other in practically every way possible in the metaverse, which will act as an upgraded social media platform. People will be able to use the metaverse through augmented reality experiences, virtual reality simulations, and other technology to:

- Try on clothes and accessories.

- Complete tasks in a virtual environment.

- Buy and exchange non-fungible tokens (NFTs) and other virtual products.

- Attend functions.

- And a lot more.