In recent years, language models like GPT-3.5 and GPT-4 have taken the AI world by storm, revolutionizing the way we interact with technology.

While GPT-3.5 paved the way for innovative chatbots, GPT-4 takes things to the next level with its multimodal capabilities and improved performance.

In this article, we’ll explore the key features of GPT-4 and the advancements it brings to the world of AI.

What is GPT-4?

GPT-4 is a language model created by OpenAI and the fourth in its series of Generative Pre-trained Transformer (GPT) models. It was released on March 14, 2023, and is available through an API as well as to ChatGPT Plus users.

As a transformer, GPT-4 was pre-trained to predict the next token in a sequence of text using both public data and “data licensed from third-party providers.” It was then fine-tuned with reinforcement learning, using feedback from both human and AI sources, to improve its alignment with human intent and its compliance with OpenAI’s policies.
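For readers curious what “predict the next token” means formally, here is the standard autoregressive language-modeling objective used for GPT-style models. It is the generic textbook objective, not a published detail of GPT-4’s training, and the notation (a token sequence $x_1, \dots, x_T$ and model parameters $\theta$) is ours:

```latex
\mathcal{L}(\theta) = -\sum_{t=1}^{T} \log p_\theta\left(x_t \mid x_1, \dots, x_{t-1}\right)
```

Minimizing this loss pushes the model to assign high probability to each actual next token given all of the tokens before it.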

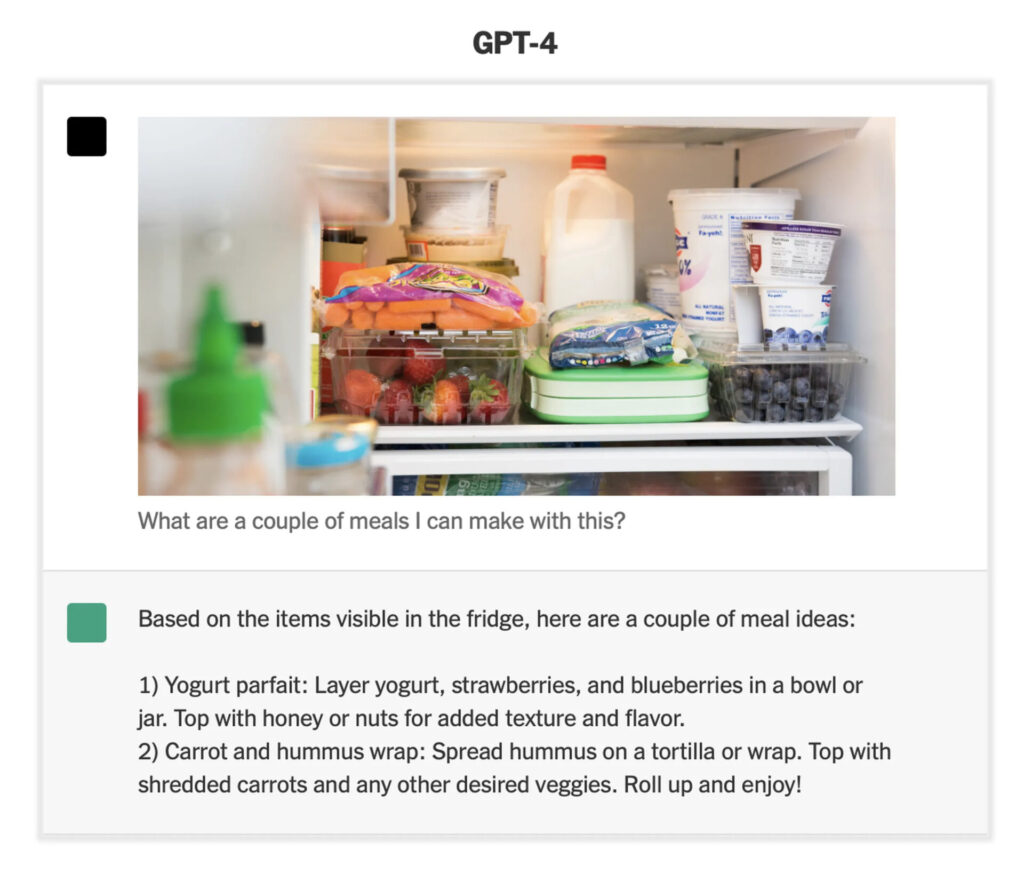

One notable feature of GPT-4 is that it is multimodal: it accepts both text and image inputs, although it produces only text output. At launch, image input was offered as a research preview rather than to the general public. Even so, this makes GPT-4 more versatile than previous GPT models, which accepted text alone.

What Can GPT-4 Do?

GPT-4 is a highly advanced language model that is capable of performing a wide range of tasks. According to OpenAI’s blog post, GPT-4 is more reliable, creative, and able to handle much more nuanced instructions than its predecessor, GPT-3.5.

One of the key improvements of GPT-4 is its ability to handle larger context windows, with two versions of the model offering context windows of 8192 and 32768 tokens, respectively. This is a significant improvement over GPT-3 and GPT-3.5, which were limited to context windows of 2048 and 4096 tokens, respectively.
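Because the context window is shared between the prompt and the model’s reply, a quick budget check shows whether a request will fit. This is a minimal sketch that assumes you already have token counts (for example from a tokenizer such as OpenAI’s tiktoken); the dictionary keys are illustrative labels, not official API model names.

```python
# Context window sizes (in tokens) quoted in the article.
# The keys are illustrative labels, not official API model names.
CONTEXT_WINDOWS = {
    "gpt-3": 2048,
    "gpt-3.5": 4096,
    "gpt-4-8k": 8192,
    "gpt-4-32k": 32768,
}


def fits_in_context(prompt_tokens: int, max_output_tokens: int, model: str) -> bool:
    """Return True if the prompt plus the tokens reserved for the reply fit.

    Input and output share one context window, so both counts are added.
    Raises KeyError for an unknown model label.
    """
    if prompt_tokens < 0 or max_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return prompt_tokens + max_output_tokens <= CONTEXT_WINDOWS[model]


if __name__ == "__main__":
    # A 7,000-token prompt with 1,000 tokens reserved for the answer:
    print(fits_in_context(7000, 1000, "gpt-4-8k"))  # True  (8,000 <= 8,192)
    print(fits_in_context(7000, 1000, "gpt-3.5"))   # False (8,000 > 4,096)
```

Reserving the output budget up front matters because a prompt that only just fits leaves the model no room to answer.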

GPT-4’s multimodal capabilities allow it to analyze images as well as text, for example interpreting photos, diagrams, or screenshots alongside a written question. This broadens the range of applications and industries it can serve.

OpenAI has been tight-lipped about the technical details of GPT-4, but the model is known to have been fine-tuned with reinforcement learning based on feedback from both human and AI sources. This fine-tuning is meant to bring its responses closer to human expectations and to OpenAI’s usage policies.

According to OpenAI CEO Sam Altman, rumors that GPT-4 has 100 trillion parameters are “complete bullshit.” OpenAI has not disclosed the model’s parameter count, but GPT-4 is nonetheless a significant improvement over GPT-3 in performance and capabilities.

GPT-4’s advanced capabilities and improved security controls have also drawn interest from government officials: Altman reportedly visited Congress to demonstrate the model’s potential.

Additionally, ahead of the launch, Microsoft Germany’s CTO Andreas Braun said that GPT-4 would offer “completely different possibilities,” including support for videos. Video input, however, was not part of the launch release.

How Can You Access GPT-4?

According to the official OpenAI blog post, GPT-4 is available via the API and to ChatGPT Plus subscribers, who pay $20 per month and get access to the model subject to a usage cap.

However, there is also a free way to access GPT-4’s text capabilities: Bing Chat. Microsoft confirmed that Bing’s chat experience had been running on GPT-4 before the model’s official release.

Is there a GPT-4 API available?

Yes, a GPT-4 API is available for developers, but it is currently only accessible through a waitlist. Developers interested in using GPT-4 can join the waitlist by providing details about their intended use of the model, including whether they plan to build a new product, integrate it into an existing product, conduct academic research, or explore the model’s capabilities.

The waitlist also asks developers to share specific ideas for using GPT-4. Once accepted, developers can access GPT-4’s capabilities through the API.
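Once access is granted, requests go to OpenAI’s Chat Completions endpoint. The following sketch uses only Python’s standard library to build (but not send) such a request and to read the reply from a response body. The prompt and the API key are placeholders, and error handling is omitted.

```python
import json
import urllib.request

# OpenAI's Chat Completions endpoint.
API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key: str, prompt: str, model: str = "gpt-4") -> urllib.request.Request:
    """Build an HTTP POST request asking `model` to answer a single user prompt."""
    payload = {
        "model": model,
        # A conversation is a list of role-tagged messages.
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the assistant's text out of a Chat Completions JSON response."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]
```

To actually send the request you would pass it to `urllib.request.urlopen` and hand the response body to `extract_reply`. Keeping the key in an environment variable such as `OPENAI_API_KEY` is a common convention, not a requirement. In practice, OpenAI’s official Python library wraps this same endpoint.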

GPT-4 vs. GPT-3.5: What’s The Difference?

The main difference between GPT-4 and its predecessor, GPT-3.5, lies in the inputs they accept. GPT-3.5 is limited to text, whereas GPT-4 is multimodal and can take image inputs in addition to text. This lets GPT-4 handle a broader range of prompts, such as questions about a photo, chart, or screenshot.

According to OpenAI, the difference between the two models can be subtle in casual conversation, so the average user may not notice much change in everyday chat. The gap appears once a task becomes sufficiently complex, which is where GPT-4’s greater reliability, creativity, and intelligence show up, as reflected in its higher scores on benchmark exams.

Key Features of GPT-4

GPT-4 has several key features that set it apart from its predecessors. Here are the most notable:

Multimodal Capabilities

One of GPT-4’s key features is its multimodal capability: it can accept images as well as text as input. This is a significant advance over previous models, such as GPT-3.5, which could process only text.

The ability to process multiple types of data allows GPT-4 to provide more accurate and useful responses to complex queries.

For example, a user could ask GPT-4 to identify an object in an image and describe its features, or to explain a chart or screenshot. In OpenAI’s developer livestream, GPT-4 turned a photo of a hand-drawn website mockup into working HTML.
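Image input was only a research preview at GPT-4’s launch, so this is a forward-looking sketch rather than something the launch API accepted. OpenAI’s later vision-capable chat models take images as “content parts” inside a user message, next to the text. This helper assembles such a message from raw image bytes by embedding them as a base64 data URL; which model you send it to is left to the caller.

```python
import base64


def image_question_message(question: str, image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Build one user message that pairs a text question with an inline image.

    The image is embedded as a base64 data URL; a public https:// URL to the
    image could be placed in the "url" field instead.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {
                "type": "image_url",
                "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
            },
        ],
    }
```

For example, `image_question_message("What object is shown here?", open("photo.png", "rb").read())` would produce a message for the `messages` list of a chat request; `photo.png` is a hypothetical file.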

Performance Improvements

GPT-4 offers several significant performance improvements over its predecessor, GPT-3.5. One of the most notable is factual correctness: GPT-4 “hallucinates,” or makes factual or reasoning errors, less often than GPT-3.5. On OpenAI’s internal adversarial factuality evaluations, GPT-4 scores 40% higher than the latest GPT-3.5.

Another significant improvement is in “steerability,” the model’s ability to change its behavior according to user requests. GPT-4 is more flexible in this regard: developers can describe the style, tone, or voice they want in the “system” message, and the model adapts its writing accordingly. This makes it easier for users to achieve their desired outputs and can be particularly useful in creative applications.
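In the Chat Completions API, this steering is done through the “system” message, which opens the conversation and describes how the model should behave. The sketch shows that message layout; the tutor instruction is an arbitrary example, and how closely the model follows it depends on the model.

```python
from typing import Dict, List


def steered_messages(style_instruction: str, user_prompt: str) -> List[Dict[str, str]]:
    """Return a conversation whose system message sets the model's style or role."""
    if not style_instruction.strip():
        raise ValueError("style_instruction must not be empty")
    return [
        # The system message comes first and frames the rest of the conversation.
        {"role": "system", "content": style_instruction},
        {"role": "user", "content": user_prompt},
    ]


messages = steered_messages(
    "You are a tutor who answers only with guiding questions, never the final answer.",
    "How do I solve 2x + 3 = 11?",
)
```

The resulting list can be used as the `messages` field of a Chat Completions request. Changing only the system message while keeping the user prompt fixed is a simple way to compare how different instructions change the model’s tone.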

Moreover, GPT-4 is better at adhering to guardrails, the rules that restrict the model’s behavior to keep it from doing something illegal or unsavory. OpenAI reports that GPT-4 is 82% less likely than GPT-3.5 to respond to requests for disallowed content.

GPT-4 Test Scores

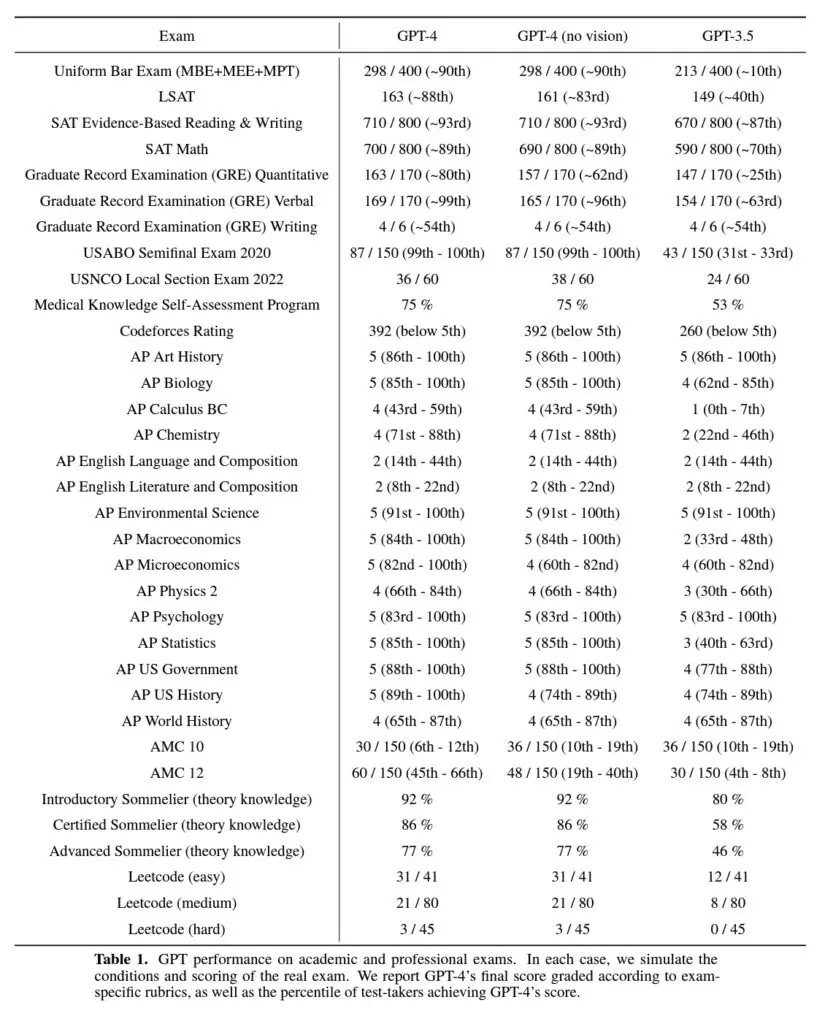

OpenAI has released GPT-4’s scores on various academic and professional exams, and they show significant improvements over GPT-3.5. On a simulated Uniform Bar Exam, GPT-4 scored around the 90th percentile of test takers, whereas GPT-3.5 scored around the 10th percentile. GPT-4’s scores on the LSAT, the quantitative and verbal GRE sections, and the Medical Knowledge Self-Assessment Program also improved substantially.

While GPT-4 performs well in basic reasoning and comprehension, it still appears to struggle with creative thought: its scores on the AP English Literature and AP English Language exams and on the GRE Writing section remained low, with little improvement over GPT-3.5.

Conclusion

After exploring the capabilities of GPT-4, it’s evident that OpenAI has made significant strides in language modeling technology. The ability to process both text and visual inputs opens up new possibilities for developers, and the improvements in factual correctness, steerability, and adherence to guardrails are noteworthy.

Additionally, GPT-4’s test scores demonstrate its strength in basic reasoning and comprehension. While it still struggles with creative thought, its overall performance gains are substantial.

As we look to the future, it’s exciting to imagine what GPT-4 can accomplish and the impact it will have on various fields.