In today’s information era, computers are used for many purposes in almost every walk of life. They can be found in a wide range of devices, including fighter jets, toys, and industrial robots, and they have made people’s lives easier and more comfortable.

Computers have a long history, from simple counting tools and the mechanical designs of the early nineteenth century to the electronic machines of the twentieth century that revolutionized the world.

This article will cover the comprehensive history of computers from the early ages to the modern era. Read on if you’re interested in knowing the history of computers.

Computing Devices;

All machines that can perform computations are referred to as “Computing Devices.” These computations can range from something as basic as adding two integers to something as sophisticated as running the stock control system of a large retail mall. Computers are considered the fastest computing machines in the world.

A computing device can perform calculations or assist in them. There are two types of computing devices: early and modern.

Early Computer Devices;

Early humans were the first to use counting devices: sticks, stones, and bones served as counting tools. As human intellect and technology progressed, more sophisticated computing devices were created. The following are some of the most notable ones, from the earliest to the most modern.

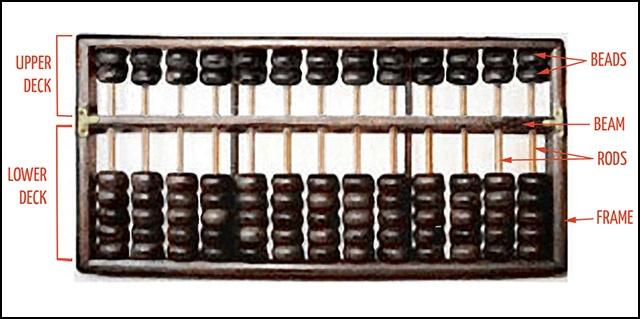

Abacus:

The abacus, which originally appeared in Asia some 5,000 years ago and is still in use today, is said to be the first computer. Users perform calculations by sliding beads arranged on a rack. It was a wooden frame with metal rods on which the beads were threaded.

To perform arithmetic, the abacus operator moved the beads according to specific rules. The abacus is still used in some countries, such as China, Russia, and Japan.

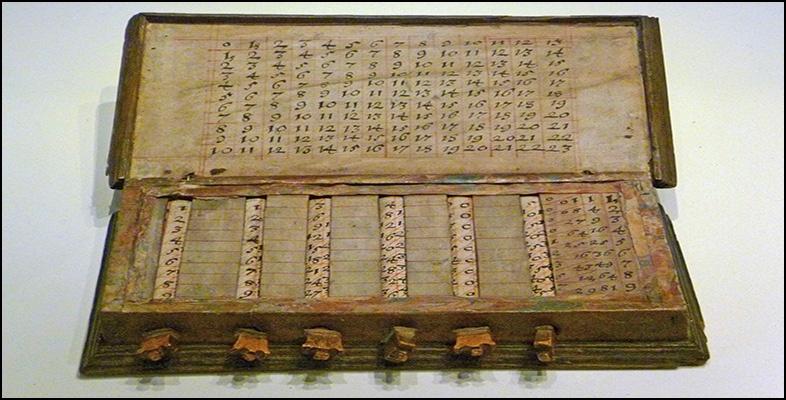

Napier’s Bones:

It was a manually operated calculating device designed by John Napier of Merchiston (1550-1617). It consisted of nine separate ivory strips, or “bones,” each marked with numerals, which were used to multiply and divide. This is why the tool became known as “Napier’s Bones.” Napier is also credited with popularizing the use of the decimal point.
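To see how the bones turn multiplication into addition, here is a minimal Python sketch. It assumes the usual layout, in which each bone lists the multiples 1 to 9 of one digit and every square is split by a diagonal into tens and units. The function names are hypothetical. Multiplying by a single digit means reading one row across the bones and adding along the diagonals. A multi-digit multiplier is handled by adding shifted partial products, as one would on paper.

```python
def bone(digit: int) -> list[tuple[int, int]]:
    """Rows 1-9 of one bone: each multiple of `digit` split into (tens, units)."""
    return [divmod(digit * k, 10) for k in range(1, 10)]


def multiply_by_digit(number: int, multiplier: int) -> int:
    """Multiply a non-negative integer by a single digit 1-9 by reading the bones."""
    if number < 0 or not 1 <= multiplier <= 9:
        raise ValueError("need number >= 0 and a multiplier digit from 1 to 9")
    # Lay one bone per digit of `number`, left to right, and read row `multiplier`.
    row = [bone(int(d))[multiplier - 1] for d in str(number)]
    n = len(row)
    digits = []
    carry = 0
    # Diagonal k (counting from the right) holds the units of bone n-1-k
    # plus the tens of the bone to its right.
    for k in range(n + 1):
        total = carry
        if k < n:
            total += row[n - 1 - k][1]
        if k >= 1:
            total += row[n - k][0]
        carry, digit = divmod(total, 10)
        digits.append(digit)
    return int("".join(str(d) for d in reversed(digits)))


def multiply(a: int, b: int) -> int:
    """Multi-digit multiplier (b >= 0): one bone reading per digit of b, shifted and summed."""
    total = 0
    for place, d in enumerate(reversed(str(b))):
        if d != "0":
            total += multiply_by_digit(a, int(d)) * 10 ** place
    return total


print(multiply_by_digit(425, 6))  # 2550
print(multiply(425, 46))          # 19550
```

For 425 × 6, the row read across the bones is 2|4, 1|2, 3|0. Adding along the diagonals from the right gives 0, 5, 5, 2, that is, 2550.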

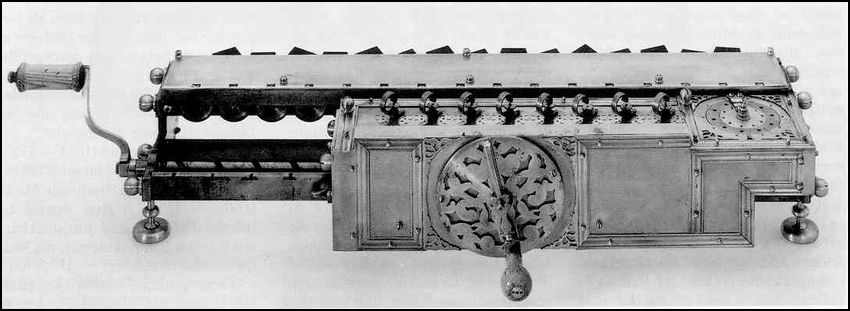

Leibniz Calculator:

Gottfried Wilhelm von Leibniz, a German mathematician and philosopher, invented a calculating machine in the 1670s, later known as the Stepped Reckoner. Built from gears and dials, it could add, subtract, multiply, and divide. Its key component was a stepped drum, now known as the Leibniz wheel.

Pascaline:

The Pascaline is also known as the Arithmetic Machine or the Adding Machine. Blaise Pascal, a French mathematician and philosopher, invented it between 1642 and 1644. It is often described as one of the first mechanical calculators. Pascal created the device to help his father, who was a tax collector.

It was limited to addition and subtraction. It was a wooden box with gears and wheels inside, one wheel per decimal digit. When a wheel completed a full revolution, passing from 9 back to 0, it advanced the next wheel by one position, carrying the digit. The totals were read through a row of windows above the wheels.
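The automatic carry is what made the Pascaline more than a set of independent counters. The following Python sketch models only the arithmetic of that ripple carry, not the actual gear mechanism. The class and method names are hypothetical, and six wheels is an arbitrary choice.

```python
class Pascaline:
    """Toy model of a Pascaline-style register: one decimal wheel per digit.

    Only the arithmetic is modeled, not the gears. wheels[0] is the units wheel.
    """

    def __init__(self, num_wheels: int = 6) -> None:
        self.wheels = [0] * num_wheels

    def _turn(self, position: int, steps: int) -> None:
        """Turn the wheel at `position` forward, carrying into higher wheels."""
        while steps and position < len(self.wheels):
            total = self.wheels[position] + steps
            self.wheels[position] = total % 10
            # A pass from 9 back to 0 advances the next wheel by one step.
            steps = total // 10
            position += 1
        # A carry out of the last wheel is lost, so the register wraps around.

    def add(self, number: int) -> None:
        """Dial in a non-negative number, one digit per wheel."""
        if number < 0:
            raise ValueError("dial in non-negative numbers only")
        for position, digit in enumerate(reversed(str(number))):
            self._turn(position, int(digit))

    def reading(self) -> int:
        """Read the windows from the highest wheel down to the units wheel."""
        return int("".join(str(d) for d in reversed(self.wheels)))


machine = Pascaline()
machine.add(1999)
machine.add(1)  # the carry ripples through three wheels
print(machine.reading())  # 2000
```

Adding 1 to 1999 shows the design choice that mattered: a single turn of the units wheel propagates through every wheel showing 9.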

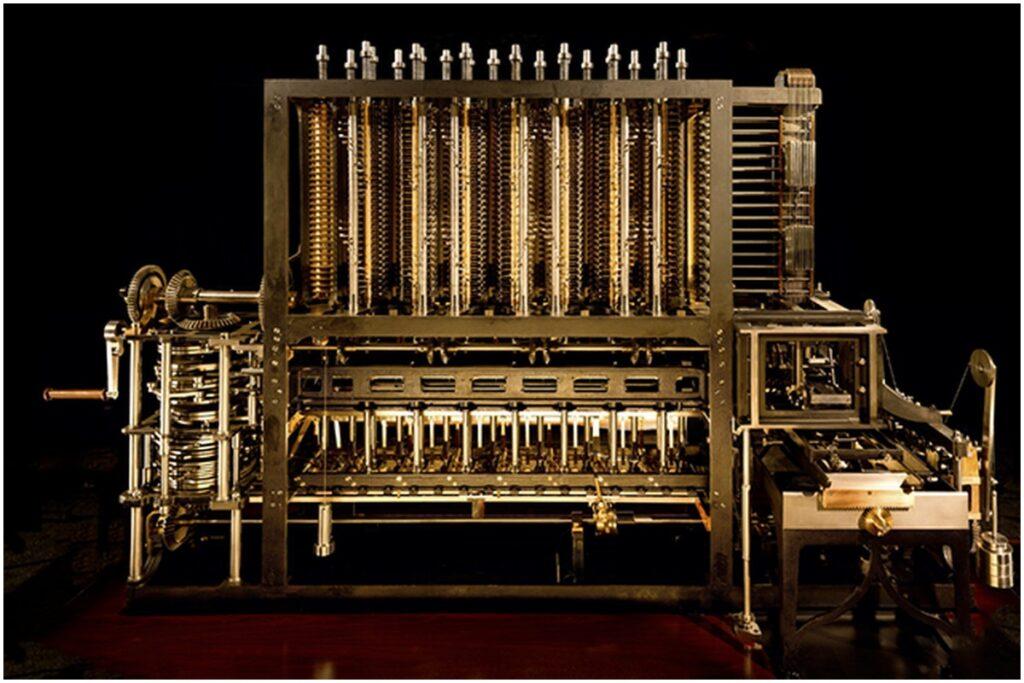

Difference Engine:

Charles Babbage, an English professor of mathematics, is considered the father of modern computers. He conceived the Difference Engine in 1822 as a machine for computing mathematical tables.

The Difference Engine was a mechanical calculator designed to tabulate polynomial functions automatically, using the method of finite differences so that only addition was needed.
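The method of finite differences lets a machine tabulate a polynomial with nothing but addition. Once the starting value and its differences are set, each new table entry comes from adding every difference into the one above it. The Python sketch below illustrates the method. The helper names are hypothetical, and the example polynomial x² + x + 41 is only an illustration.

```python
from collections.abc import Callable


def initial_differences(poly: Callable[[int], int], degree: int, start: int = 0) -> list[int]:
    """Return [p(start), Δp(start), Δ²p(start), ...] up to the given degree.

    On the real engine these starting values were set by hand.
    """
    values = [poly(start + i) for i in range(degree + 1)]
    diffs = []
    while values:
        diffs.append(values[0])
        # Next row of the difference table: differences of adjacent values.
        values = [b - a for a, b in zip(values, values[1:])]
    return diffs


def tabulate(diffs: list[int], count: int) -> list[int]:
    """Produce `count` successive polynomial values using only additions."""
    registers = list(diffs)
    table = []
    for _ in range(count):
        table.append(registers[0])
        # Add each difference into the register above it, top to bottom,
        # so every register is updated with the old value of the one below.
        for i in range(len(registers) - 1):
            registers[i] += registers[i + 1]
    return table


def p(x: int) -> int:
    return x * x + x + 41


print(tabulate(initial_differences(p, degree=2), 6))  # [41, 43, 47, 53, 61, 71]
```

For a polynomial of degree n, the n-th difference is constant, so n registers of additions are enough to generate the whole table.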

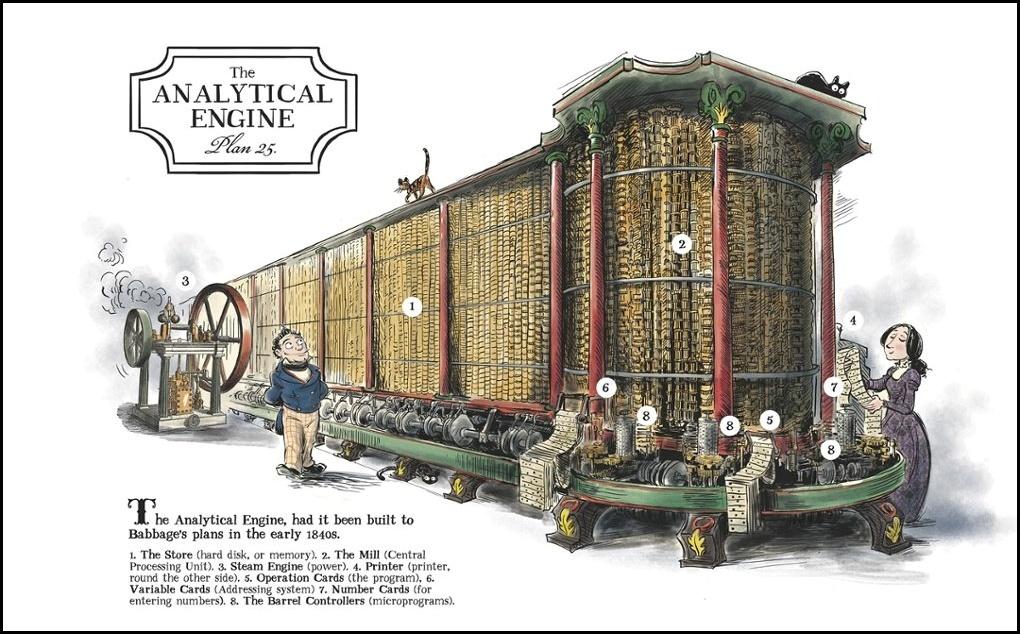

Analytical Engine:

After about ten years of work on the Difference Engine, Babbage turned to a far more ambitious design: the first general-purpose computer, which he called the Analytical Engine.

The Analytical Engine was a mechanical computer that used punched cards as input. It was designed to carry out any calculation that could be expressed as a sequence of instructions, and to hold numbers in a memory (the “store”) separate from its arithmetic unit (the “mill”). Babbage never completed it.

Tabulating Machine:

Herman Hollerith, an American statistician, developed it for the 1890 United States Census. It was a punched-card-based electromechanical tabulator that could compile statistics and sort or organize data. In 1896, Hollerith founded the Tabulating Machine Company, which merged with other firms in 1911 and was renamed International Business Machines (IBM) in 1924.

Differential Analyzer:

Vannevar Bush built the Differential Analyzer at MIT around 1930. It was an analog computer that solved differential equations using mechanical wheel-and-disc integrators driven by electric motors, and it was one of the first machines of its kind in the United States.

Ada Lovelace: The First Computer Programmer:

In the early nineteenth century, the distinction between a calculator and a computer was not evident to most people, even to the intellectually adventurous guests at Babbage’s soirees. One exception was a young woman of unusual background and education.

Augusta Ada King, Countess of Lovelace, was the daughter of the poet Lord Byron and Anne Milbanke, a mathematically minded woman. Augustus De Morgan, a distinguished mathematician and logician, was one of her tutors.

Lady Lovelace attended Babbage’s parties and was enthralled by his Difference Engine. She also corresponded with him and asked him pointed questions. It was his proposal for the Analytical Engine, however, that truly captured her interest.

By 1843, when she was 27 years old, she understood the machine well enough to write the definitive paper describing it and explaining the fundamental contrast between it and existing calculators. She argued that the Analytical Engine went beyond arithmetic: because it worked with general symbols rather than only numbers, it made “a link…between the activities of matter and the abstract mental processes of the most abstract part of mathematical research.” It was a physical device capable of operating in the domain of abstract thought.

Lady Lovelace correctly recognized that this was not only something no one had built before, but something no one had even imagined. She is widely regarded as the world’s first computer programmer, and at the time she was the only expert in the method of sequencing instructions on the punched cards used by the Analytical Engine.

Generation Of Computers;

The successive stages of improvement in computer technology are referred to as “computer generations.” With each generation, circuitry became smaller and more sophisticated, and computer performance, speed, power, and memory all improved. The history of electronic computers is usually divided into five generations.

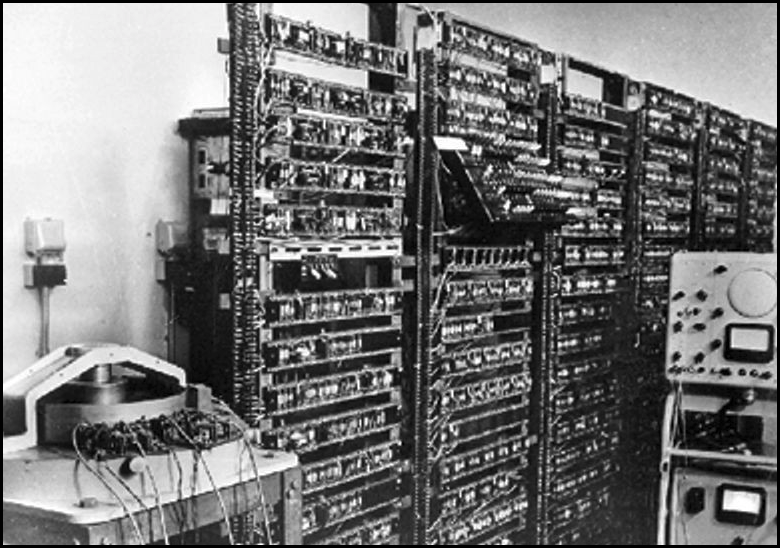

The First Generation: 1946-1958 (The Vacuum Tube Era)

The first generation of computers used vacuum tubes. These machines were slow, costly, and often inefficient. The ENIAC (Electronic Numerical Integrator and Computer), which used thousands of vacuum tubes, was one of the first-generation computers. It occupied a lot of space and produced a lot of heat.

In 1951, the United States Census Bureau obtained the first commercial computer, the UNIVAC.

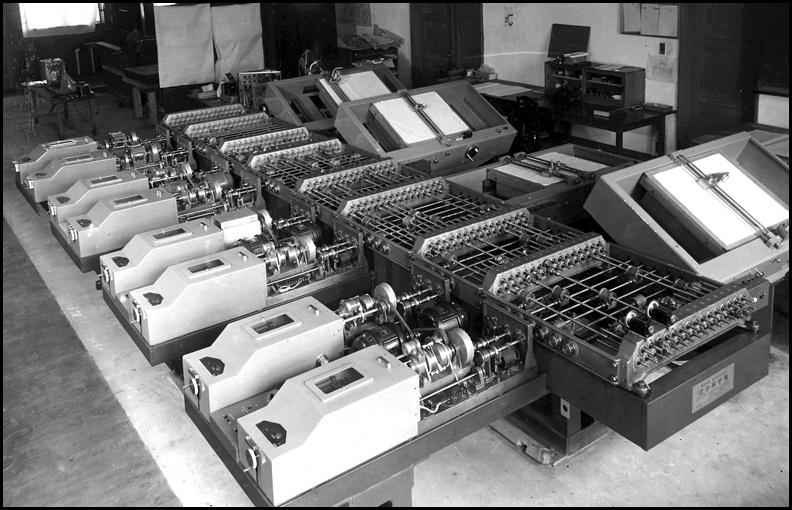

The Second Generation: 1959-1964 (The Transistor Era)

In the second generation of computers, transistors replaced vacuum tubes. A transistor is a device made of semiconductor material. It was developed at Bell Labs in 1947.

The transistor was faster, more reliable, smaller, and cheaper to manufacture than a vacuum tube; a single transistor is often said to have done the work of about 40 vacuum tubes. Second-generation computers also moved from machine language to assembly language, which let programmers write instructions as short words instead of binary codes.

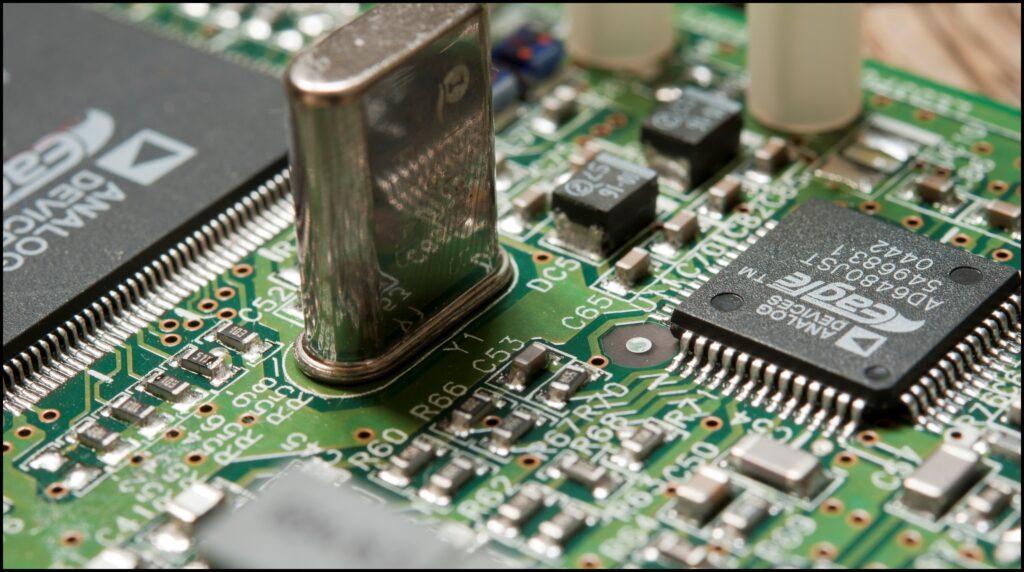

The Third Generation: 1965-1970 (Integrated Circuits)

The third generation of computers was defined by the integrated circuit (IC). ICs are built on chips of silicon, a semiconductor, and they made computers faster and more efficient. They were a huge step forward in computer technology.

An IC packs a large number of transistors onto a single piece of silicon. The introduction of integrated circuits dramatically reduced the size and cost of computers while also increasing their power.

Computers of the third generation could execute instructions in billionths of a second.

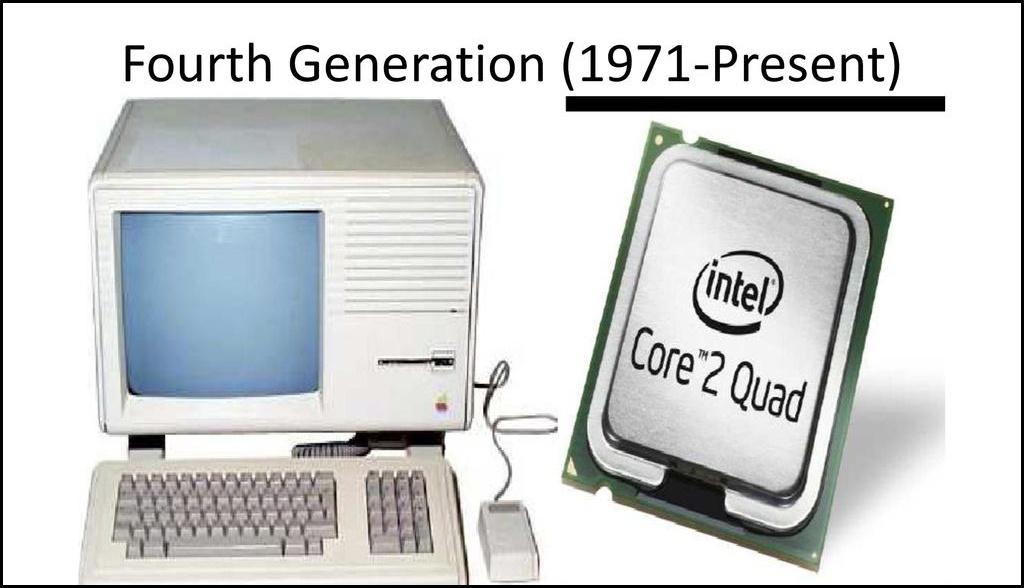

The Fourth Generation: 1971-Today (The Microprocessor Age)

The Fourth Generation is marked by integrated circuits and, above all, by the microprocessor: a single chip that can do all the processing of a full-scale computer. Placing thousands, and later millions, of transistors on a single chip made computers much faster and smaller.

The microprocessor led to the invention of personal computers, or microcomputers.

The Fifth Generation: Present and Beyond

Fifth-generation computers are still under development and are based on artificial intelligence. This generation’s key developments include robots and other intelligent systems. The primary goal of fifth-generation computing is to create machines that can learn and self-organize and respond to natural language input.

Types of Computers:

Computers can be classified into three categories based on their functionality.

Analog Computer:

Analog computers represent and process continuous data. Temperature, pressure, voltage, and weight are examples of analog data. These computers or devices accept analog data and output analog values.

They take in data and produce results continuously, so they are extremely fast and deliver results almost instantly. However, their results are only approximate.

Analog computers are usually special-purpose devices. The following are a few examples of analog computers or devices:

- Mercury Thermometer

- Voltmeter

- Simple Weighing Scale

- Barometer

Digital Computer:

A digital computer represents physical quantities using digits, that is, numbers. Digital computers work with discrete values. Depending on the input they receive from the user, they use these numbers to perform arithmetic calculations and make logical decisions to reach a conclusion. Digital computers are multipurpose machines that can address a wide range of problems. They can be applied to nearly every aspect of life, including science and research, education, health, engineering, business, banking, markets, space, and aviation.

They have high processing capabilities, enormous memory, and storage to handle and preserve data and information. Digital computers are available in a variety of designs and sizes.

They can be divided into four categories based on their size, speed, memory, and peripheral capability.

- Microcomputers

- Minicomputers

- Mainframe Computers

- Supercomputers

Hybrid Computers:

The term “hybrid computer” refers to a computer that combines digital and analog technology. A hybrid system pairs the speed of analog computers with the precision and control of digital computers, and it can accept data in either form.

A typical hybrid computer accepts analog signals, converts them to digital form for processing, and can convert the results back to analog form. Analog-to-digital and digital-to-analog converters make this integration possible.
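The conversion step can be illustrated with uniform quantization, the simplest model of what an analog-to-digital converter does. This Python sketch assumes an 8-bit converter with a 0 to 5 volt input range; both figures and the function names are hypothetical, and real converters do this work in hardware.

```python
def adc(voltage: float, v_ref: float = 5.0, bits: int = 8) -> int:
    """Analog-to-digital: map a voltage in [0, v_ref] to an integer code.

    Out-of-range inputs are clamped, as a real converter saturates.
    """
    levels = 2 ** bits
    clamped = min(max(voltage, 0.0), v_ref)
    # Uniform quantization: each code covers a step of v_ref / levels volts.
    return min(int(clamped / v_ref * levels), levels - 1)


def dac(code: int, v_ref: float = 5.0, bits: int = 8) -> float:
    """Digital-to-analog: return the voltage at the bottom of the code's step."""
    return code * v_ref / 2 ** bits


reading = adc(3.3)  # analog sensor voltage -> digital code
print(reading, dac(reading))  # 168 3.28125
```

Under these assumptions, the round trip loses at most one step (5/256 V, about 0.02 V) of precision, which is the price of moving an analog signal into the digital domain.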

Hybrid machines are most commonly utilized in scientific applications, industrial process control, and robotics. They are also used in hospitals to monitor a patient’s heart rate, pulse rate, respiration, and brain activity, among other things.

Timeline of Computer’s History: (Milestone Achievements)

In this section, you’ll go through the timeline of the computer’s history, its milestones, and achievements.

- Ancient times – Abacus: One of the earliest known calculating tools.

- 1617 – Napier’s Bones: Also known as Rabdology, it was used to calculate products and quotients of numbers.

- 1620-1630 – Slide Rule (known as the “slipstick” in the United States): A mechanical analog computer.

- 1642 – Pascaline: A complex system of gears that worked much like a clock. It could add and subtract.

- 1672-1694 – Stepped Reckoner: The first calculator that could do all four arithmetic operations (addition, subtraction, multiplication, and division).

- 1801 – Jacquard loom: A mechanical loom whose weaving pattern was controlled by cards with holes punched in them, which popularized the punched card.

- 1822 – Difference engine: It’s a mechanical calculator that tabulates polynomial functions automatically.

- 1837 – Analytical Engine: The first design for a general-purpose mechanical computer.

- 1890 – Tabulating Machine: It was created to assist in processing data during the 1890 United States Census.

- 1936-1938 – Z1: Konrad Zuse’s mechanical binary computer, often described as the first freely programmable computer.

- 1939 – ABC (Atanasoff-Berry Computer): One of the first electronic digital computers. It used the binary number system, which consists of 1s and 0s.

- 1944 – Mark I: Also known as the Harvard Mark I, a large-scale electromechanical computer.

- 1946 – ENIAC (Electronic Numerical Integrator and Computer): The first electronic general-purpose computer.

- 1947 – Transistors: A semiconductor device that went on to replace the vacuum tube in second-generation computers.

- 1949 – EDSAC (Electronic Delay Storage Automatic Calculator): One of the first practical stored-program computers.

- 1949 – EDVAC (Electronic Discrete Variable Automatic Computer): A binary serial computer with ultrasonic serial memory, automatic addition, subtraction, and multiplication, programmed division, and automatic checking.

- 1951 – UNIVAC (Universal Automatic Computer): One of the first commercially available general-purpose computers.

- 1961 – ICs (Integrated Circuits): A single chip that can replace many separate transistors. ICs became the foundation of third-generation computers.

- 1964 – Computer Mouse & graphical user interface (GUI): This represents the transition of the computer from a specialist machine for scientists and mathematicians to more widely available technology.

- 1970 – Intel 1103: The first commercially available DRAM (Dynamic Random Access Memory) chip.

- 1971 – Microprocessors: Intel Corporation launched the Intel 4004 (four-thousand-four), a 4-bit central processing unit (CPU) on a single chip and the first commercially available microprocessor.

- 1971 – Floppy Disk: It was a storage device. It had a square container with an 8-inch flexible magnetic disk.

- 1973 – Ethernet: Ethernet is a group of wired computer networking protocols widely used in local, metropolitan, and wide area networks.

- 1974-1975 – Personal Computer: Scelbi, Mark-8, Altair, IBM 5100: The earliest personal computers. The IBM 5100 is the first portable computer to be commercially available.

- 1976-1977 – Apple I, II: The Apple I was one of the first single-circuit-board computers. The Apple II featured color graphics and used an audio cassette drive for storage.

- 1981 – MS-DOS (Disk Operating System): It introduced the general public to a command-line interface, and typing obscure commands became part of many people’s daily work.

- 1981 – IBM PC (codenamed Acorn): IBM’s first personal computer.

- 1983-1984 – Lisa, Apple Macintosh: Among the first commercially available personal computers with a graphical user interface (GUI).

- 1985 – Microsoft Windows 1.0: Windows 1.0 is the first version of Microsoft’s operating system. Instead of inputting MS-DOS commands, you now use a mouse to navigate across windows by pointing and clicking.

- 1990 – HyperText Markup Language (HTML) & World Wide Web (WWW): The World Wide Web is a network of interconnected hypertext documents that can be accessed via the Internet. It is also known simply as the Web.

- 2003 – AMD’s Athlon 64: The first 64-bit x86 processor for consumer personal computers.

- 2006 – MacBook Pro: Apple’s first Intel-based laptop, powered by a dual-core Intel Core Duo processor.

- 2007 – iPhone: The iPhone ushered in the modern smartphone era.

- 2008 – Android: The T-Mobile G1 was the first Android device, and it had unique design aspects such as a swing-out keyboard and a “chin” on the back.

- 2011 – 3D transistors: Intel announced the commercialization of three-dimensional (Tri-Gate) transistors.

- 2015 – Ubuntu operating system: On February 11, 2015, the BQ Aquaris E4.5 Ubuntu Edition became the first phone to run the Ubuntu operating system.

Some Interesting Facts About Old Computers;

Wait! Before concluding the article, let me share some cool facts about computers.

- The ENIAC, the world’s first general-purpose electronic computer, weighed almost 27 tons and occupied about 1,800 square feet.

- In 1980, the first 1GB hard disk drive was introduced, weighing around 550 pounds and costing $40,000.

- In 1936, Soviet engineer Vladimir Lukyanov built the “water integrator,” an analog computer that worked using flowing water.

- A programming language designed by the US Department of Defense for developing military applications was named Ada in honor of Ada Lovelace’s contributions to computing.

The Concluding Note;

Huh!!! It was a really long history, right? Pat yourself on the back if you’ve reached the end of this article. I hope you now know more about the history of computers.

Please let us know in the comment section below if you think we’ve missed something. Also, you can suggest some edits to improve the article for the users.

Please share it with your friends and colleagues to appreciate our work.

Happy Learning 🙂
