Asymptotic analysis is a mathematical method used to analyze the performance of algorithms as they are scaled up to larger problem sizes. It is used to understand how the running time of an algorithm changes with increasing input size and to compare the performance of different algorithms.

Asymptotic analysis is a key tool in studying data structures and algorithms and is essential for making informed decisions about which algorithms to use in different cases.

The primary purpose of the asymptotic analysis is to evaluate an algorithm’s behavior for a large input size. To be more precise, if T(n) is the running time for an input of size n, we would like to know how T(n) behaves or grows at very large values of n. The term “asymptotic analysis” refers to analyzing an algorithm with large input.

In this tutorial, we will discuss the fundamentals of asymptotic analysis, examine its applications in data structure and algorithm design, and explore some standard asymptotic notations such as the Big-O notation, Theta notation and Omega notation that are used in algorithm analysis.

Asymptotic Notations

Asymptotic notations measure an algorithm’s efficiency by describing the number of resources (such as time or memory) needed to solve a problem. It compares algorithms based on the amount of time or memory it takes to execute. Asymptotic notations provide a way to describe the growth of a function as the input size tends toward infinity.

A basic mathematical function, such as n², n log n, etc., is commonly used to demonstrate an algorithm’s asymptotic behavior.

The most commonly used asymptotic notations are Big O, Big Ω, and Big Theta. In addition to these three, there are also asymptotic notations such as Little o, Big Omicron, and Big Gamma. These notations provide more precise descriptions of the complexity of algorithms.

In this tutorial, we will only discuss the Big-O Notation, Theta Notation, and Omega Notation.

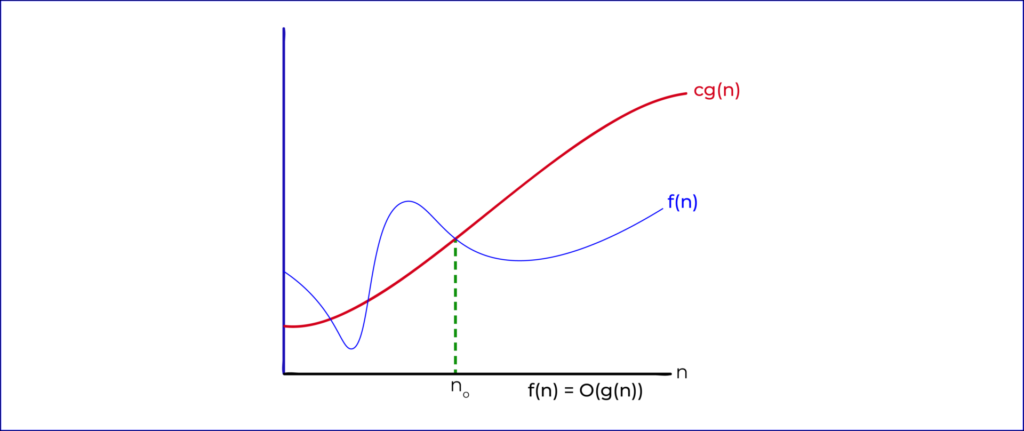

Big-O Notation

The Big-O Notation is a mathematical notation used to represent the complexity of an algorithm. It is used to describe the amount of time or memory space an algorithm requires to run and is often used to compare the efficiency of different algorithms. The Big-O Notation is based on the worst-case scenario, meaning that the algorithm will take the longest amount of time or memory space to run.

The Big-O Notation is represented by a mathematical equation, O(n), where n represents the input size or the number of operations. The lower the number, the more efficient the algorithm is. Common Big-O Notations include O(1), O(log n), O(n), O(n log n), O(n2) and O(2n).

- O(1) is known as constant time and refers to an algorithm that always takes the same amount of time to run, regardless of the input size. An example of this would be a simple algorithm to find the maximum value in an array.

- O(log n) is known as logarithmic time and refers to an algorithm that takes a logarithmic amount of time to run. An example of this would be a binary search algorithm.

- O(n) is known as linear time and refers to an algorithm that takes a linear amount of time to run. An example of this would be a linear search algorithm.

- O(n log n) is known as logarithmic time and refers to an algorithm that takes a logarithmic amount of time to run. An example of this would be a quicksort algorithm.

- O(n2) is known as quadratic time and refers to an algorithm that takes a quadratic amount of time to run. An example of this would be a bubble sort algorithm.

O(2n) is known as exponential time and refers to an algorithm that takes an exponential amount of time to run. An example of this would be a brute force algorithm.

Mathematical Representation of Big-O Notation

The mathematical representation of Omega Notation is expressed as:

O(g(n)) = { f(n): there exist positive constants c and n0

such that 0 ≤ f(n) ≤ cg(n) for all n ≥ n0 }The above mathematical representation of Big O Notation is a way to describe the upper bound of a function or algorithm’s growth rate. It states that for a given function g(n), there exists another function f(n) such that for any n greater than or equal to a certain constant n0, f(n) is always less than or equal to a certain constant c multiplied by g(n). This means that the growth rate of f(n) is bounded by the growth rate of g(n), and as a result, f(n) is considered to have a Big O Notation of g(n).

For example, consider an algorithm with the following running time:

f(n) = 10n² + 5n + 1We can express this algorithm’s complexity using Big O notation as O(n²). This means that the running time of the algorithm is upper bound by cn² for some positive constant c and for all n ≥ n0.

Worst-Case: The worst-case time complexity of the algorithm is O(n²) since it is upper bounded by cn² for some positive constant c.

Best-Case: The best-case time complexity of the algorithm is O(1) since it is upper bound by c for some positive constant c.

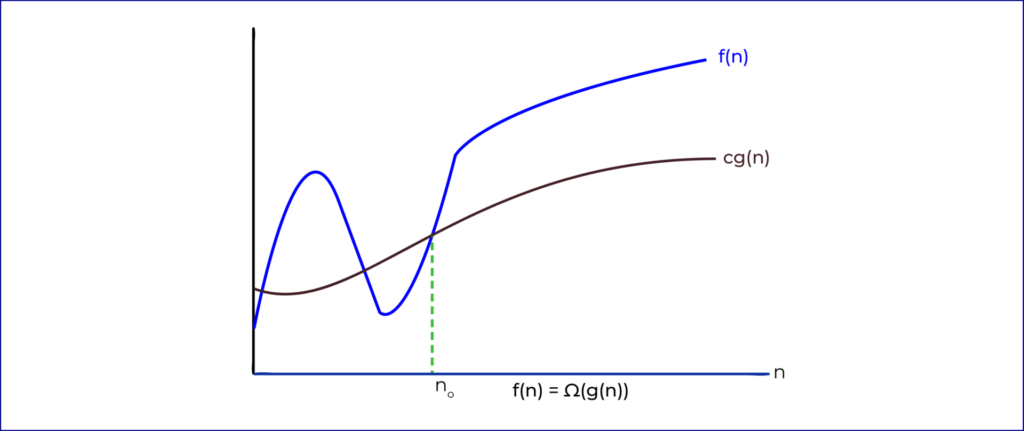

Omega Notation (Ω-notation)

The Omega Notation Ω-notation is an asymptotic notation used to describe the asymptotic behavior of functions. It is used to describe how the output of a function grows as its input grows. It is typically used to compare the relative performance of algorithms.

The Omega Notation describes the best-case running time of an algorithm, which is the minimum amount of time it takes to complete a given task. It is represented by the Greek letter omega Ω. This notation describes the lower bound of a function’s growth rate.

For example, if an algorithm takes at least n² steps to complete a task, then its running time could be expressed as Ω(n²). This means that the running time is at least n², but it could be more.

Another example is if an algorithm takes at least 2n steps to complete a task, then its running time could be expressed as Ω(2n). This means that the running time is at least 2n, but it could be more.

In general, the Omega Notation describes how a function’s output grows as its input grows. It is often used to compare the relative performance of different algorithms.

Mathematical Representation of Omega Notation

Omega Notation is a way of expressing the asymptotic lower bound of a function. It is represented by the Greek letter Ω (Omega). The mathematical representation of Omega Notation is as follows:

Ω(g(n)) = { f(n): there exist positive constants c and n0

such that 0 ≤ cg(n) ≤ f(n) for all n ≥ n0 }This mathematical representation states that Ω(g(n)) is a set of functions f(n) such that for any given function g(n) and constants c and n0, if 0 ≤ cg(n) ≤ f(n) holds true for all n ≥ n0, then f(n) is a member of the set Ω(g(n)). In other words, it is the set of all functions that are asymptotically greater than or equal to the given function g(n).

Let us take an example to understand this better. Suppose we have a function f(n) that represents the time some algorithm takes to execute. We can say that Ω(f(n)) is the set of all functions that represent the time taken for the same algorithm to execute, but with a time lower bound of c*f(n). This means that for any given function f(n) and constants c and n0, if 0 ≤ c*f(n) ≤ f(n) holds true for all n ≥ n0, then f(n) is a member of the set Ω(f(n)).

For this example, let’s look at the best, average, and worst-case scenarios. In the best case scenario, the time taken for the algorithm to execute is 0, which would mean that c*f(n) = 0 and f(n) = 0, and thus f(n) is a member of the set Ω(f(n)). In the average case scenario, the time taken for the algorithm to execute is some positive constant c*f(n), and thus f(n) is a member of the set Ω(f(n)). In the worst-case scenario, the time taken for the algorithm to execute is some large positive constant, and thus f(n) is a member of the set Ω(f(n)).

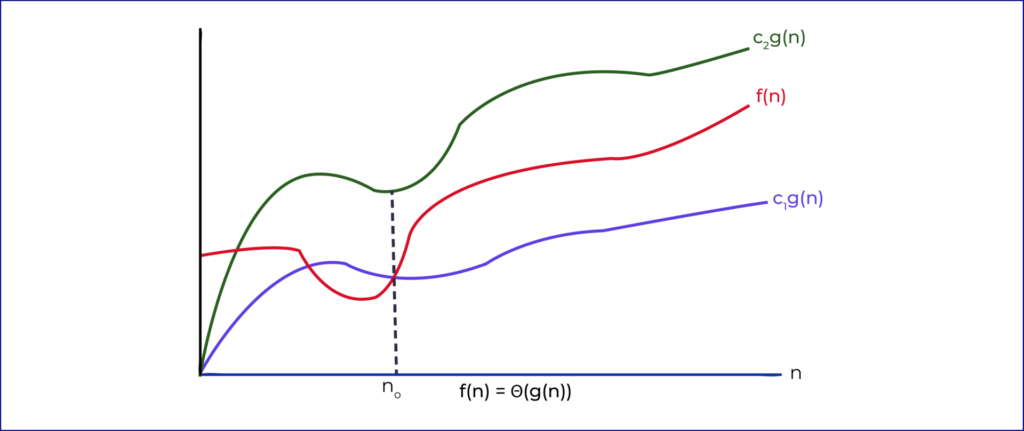

Theta Notation (Θ-notation)

Theta notation is a way of expressing the growth rate of an algorithm. It is used to classify algorithms according to how they respond to changes in the input size. It is a measure of the upper and lower bounds on an algorithm’s running time relative to the input size.

To describe an algorithm with theta notation, the number of operations is expressed as a function of the size of the input. For example, a linear search algorithm requires a number of operations proportional to the size of the input array. This can be expressed using theta notation as Θ(n), where n is the size of the input array.

Theta notation can be used to compare algorithms. For example, a linear search algorithm may take Θ(n) time, while a binary search algorithm may take Θ(log n) time. This means that the binary search algorithm is more efficient than the linear search algorithm, as the time taken by the binary search algorithm increases more slowly as the size of the input increases.

It is mainly used to analyze the average-case complexity of an algorithm since it represents the upper and lower bounds of the running time of an algorithm.

Mathematical Representation Of Theta Notation

The mathematical representation of theta notation is:

Θ (g(n)) = {f(n): there exist positive constants c1, c2 and n0

such that 0 ≤ c1 * g(n) ≤ f(n) ≤ c2 * g(n) for all n ≥ n0}

For example, let’s say we have an algorithm that takes n steps to complete. The time complexity of this algorithm can be expressed using theta notation as Θ(n). This means that there exists two positive constants, c1 and c2, and a minimum input size, n0, such that for all inputs of size n ≥ n0, the algorithm will take at least c1 * n steps and at most c2 * n steps to complete.

The average case for theta notation is the weighted average of the best and worst cases, which is (c1 + c2) / 2 * g(n). For example, if c1 is 2 and c2 is 4, then the average case is 3 * g(n).